Foul fabrication

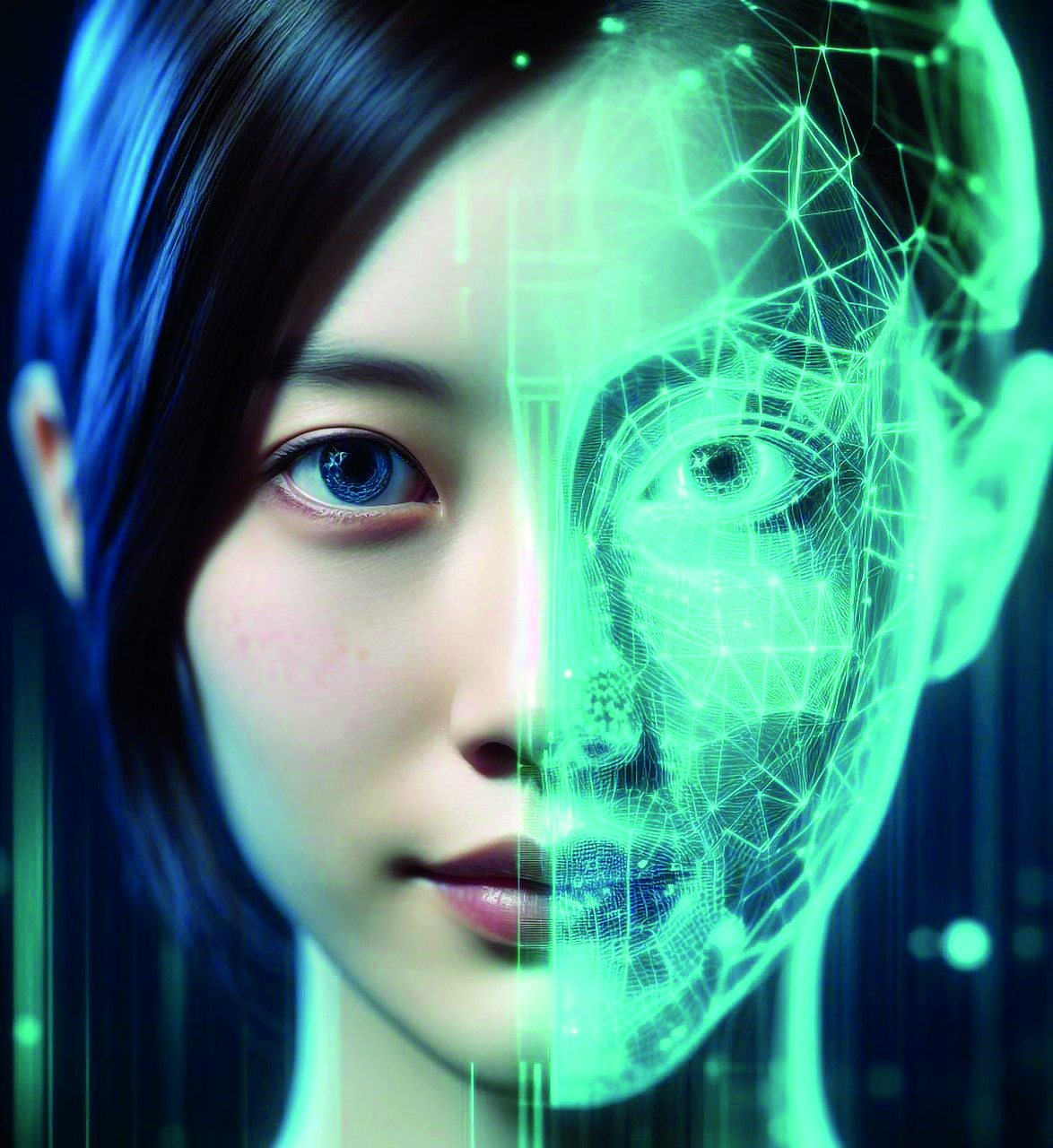

With the ramifications of deepfake technology unfolding in the real world, a sense of fear prevails, calling for meticulous caution and public awareness

Recently, a deepfake video of an Indian actor, Rashmika Mandanna, went viral on social media. This has sparked a furore over AI that can be used to create convincing fake videos of celebrities or even of people we know in our day-to-day lives.

What are deepfakes?

Deepfakes (a portmanteau of “deep learning” and “fake”) are audio and video content created or manipulated using artificial intelligence (AI) and deep learning techniques, often with the intention of deceiving viewers or making it appear as though someone said or did something they did not.

The AI models learn a person’s speech and facial expressions, then either overlay them onto pre-existing video material or generate wholly new content, producing incredibly convincing and realistic fakes. The result might be a video of a well-known person dancing to a song or delivering an altered statement. It is like putting a mask of that person over the footage and making them say whatever the deepfake’s author desires.

Psychological impact on the person:

* Cyberfear: “Cyberfear” refers to the anxiety, concern, or fear that individuals or organisations may experience regarding potential threats and risks in the digital or cyber realm;

* Spreading misinformation: Deepfakes have the potential to spread misinformation on a large scale when used against a famous public figure;

* Manipulation: False narratives can be spread during political campaigns to influence and manipulate people in large numbers;

* Blackmail: A person can be blackmailed by someone who threatens to release such fabricated footage;

* Lack of trust: Deepfakes can erode trust in visual and audio media. It will get harder to trust what you see online;

* Identity theft and cheating: A common misuse is creating fake IDs or fooling visual verification checks in order to steal and cheat;

* Public figures: Famous people are at the greatest risk. Deepfakes of them can harm their security and image, and leave the public unable to distinguish genuine media from manipulated media.

How can you spot one?

* Inconsistencies in eye and mouth movements, even if subtle;

* Audio-visual discrepancies between the words heard and the way they are enunciated on screen;

* Source verification is a must for important, relevant content;

* Context must be considered when viewing a video. A public political figure suddenly breaking into an inappropriate dance should raise some suspicion;

* Deepfake detection tools that flag manipulated videos are now available.

* Fun fact: In a study of how well people can detect deepfakes with the naked eye, participants greatly overestimated their abilities. The vast majority of respondents showed high confidence in their ability to detect deepfakes despite being wrong (Köbis, Doležalová, & Soraperra, 2021).

What can we do to prevent this?

* Awareness: Education and awareness are a must, especially for the newer generation;

* Avoid spreading or forwarding such malicious content;

* Legislation that specifically addresses deepfakes needs to be in place;

* Technological solutions and advancements are needed.

* Current legal position in India: Deepfakes aren’t directly recognised under Indian law; the issue is indirectly addressed via Section 66E of the IT Act, which makes it illegal to capture, publish, or transmit the image of a person’s private area without that person’s consent.

Tips from a mental health expert:

* Media literacy: Encourage everyone to view content with a critical eye and to fact-check it against multiple sources rather than trusting a single one;

* Privacy online: Be mindful not to post content online that could land you or others in trouble;

* Stay informed and updated: Stay abreast of issues, technology, and their possible solutions;

* Be mindful, and don’t believe everything you see online.

Famous people and their viral deepfakes:

* Barack Obama: In 2018, a deepfake video featuring former US President Barack Obama was created to raise awareness about the potential dangers of deepfake technology.

* Mark Zuckerberg: A deepfake video of him was created as part of an art installation to highlight the risks associated with deepfake technology.

* Gal Gadot: A pornographic deepfake video of the actress circulated on the internet, clearly depicting the potential misuse of deepfake technology for creating non-consensual and harmful content.

Send your questions to [email protected]